Log and trace management made easy. Quickwit Integration via Glasskube

Why nobody grows up wanting to be a DevOps engineer

Glasskube Beta is live.

Why contributor guidelines matter.

10 Steps to Build a Top-Tier Discord Server for Your Open Source Community. ✨

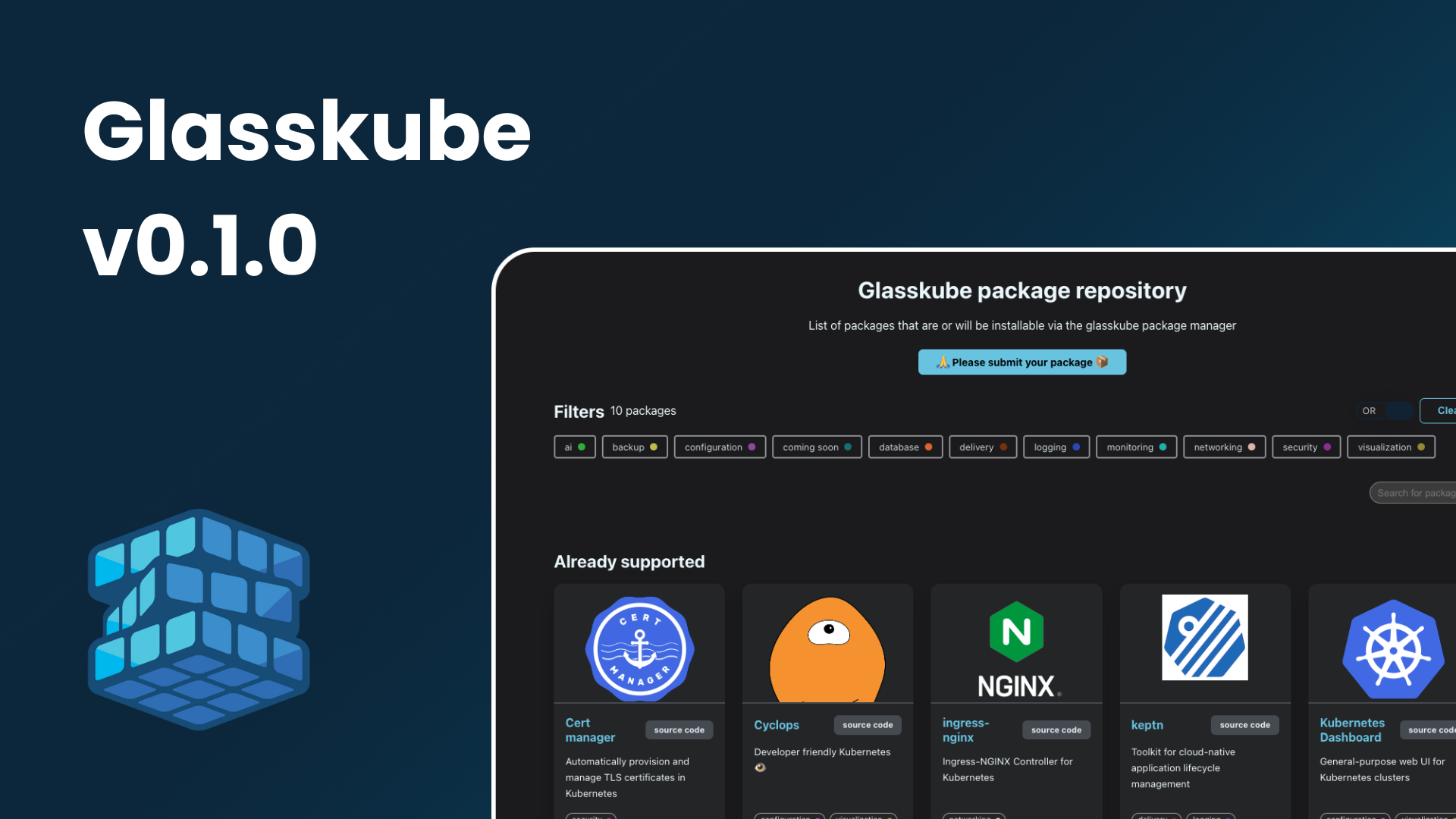

Glasskube v0.1.0 — Introducing Dependency Management

Glasskube v0.0.3 — Introducing Package Updates

The Inner Workings of Kubernetes Management Frontends — A Software Engineer’s Perspective